Working with open hardware

Some weeks ago at LinuxCon EU in Barcelona I talked about how to use QEMU to improve the reliability of device drivers.

At Igalia we have been using this for some projects. One of them is the Linux IndustryPack driver. For this project I virtualized two boards: the TEWS TPCI200 PCI carrier and the GE IP-Octal 232 module. This work helped us find some bugs in the device driver and improve its quality.

Now, those two boards are examples of products available on the market. But fortunately we can use the same approach to develop for hardware that doesn’t exist yet, or is still in a prototype phase.

Such is the case of a project we are working on: adding Linux support for this FMC Time-to-Digital Converter (TDC).


This piece of hardware is designed by CERN and is published under the CERN Open Hardware Licence, which, in their own words, “is to hardware what the General Public Licence (GPL) is to software”.

The Open Hardware repository hosts a number of projects that have been published under this license.

Why we use QEMU

So we are developing the device driver for this hardware, as my colleague Samuel explains in his blog. I’m responsible for virtualizing it using QEMU. There are two main reasons why we want to do this:

- Limited availability of the hardware: although the specification is pretty much ready, this is still a prototype. The board is not (yet) commercially available. With virtual hardware, the whole development team can have as many “boards” as it needs.

- Testing: we can test the software against the virtual hardware and force all kinds of conditions and scenarios, including ones that would probably require us to physically damage a real board.

While the first point might be the most obvious one, testing the software is actually what we’re most interested in.

My colleague Miguel wrote a detailed blog post on how we have been using QEMU to do testing.

Writing the virtual hardware

Writing a virtual version of a particular piece of hardware for this purpose is not as hard as it might look.

First, the point is not to reproduce accurately how the hardware works, but rather how it behaves from the operating system’s point of view: the hardware is a black box that the OS talks to.
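To make the black-box idea concrete, here is a minimal, standalone C sketch of what the guest actually sees: a handful of registers that it reads and writes, plus a model-side hook that feeds it data. The register map (`REG_CTRL`, `REG_STATUS`, `REG_DATA`), the FIFO depth and all function names are hypothetical and are not taken from the FMC TDC specification. In a real QEMU device model these handlers would be hooked up through a `MemoryRegionOps` structure; here they are plain functions so the example compiles on its own.

```c
#include <stdint.h>

/* Hypothetical register map: offsets and bits are illustrative,
   not taken from the FMC TDC specification. */
enum {
    REG_CTRL   = 0x00,  /* bit 0: acquisition enable */
    REG_STATUS = 0x04,  /* bit 0: data ready (read-only) */
    REG_DATA   = 0x08,  /* next timestamp in the FIFO; reading pops it */
};

#define CTRL_ENABLE  0x1u
#define STATUS_READY 0x1u
#define FIFO_DEPTH   16

typedef struct {
    uint32_t ctrl;
    uint32_t fifo[FIFO_DEPTH];
    unsigned head, count;
} TdcState;

/* Called when the guest reads from the device's MMIO window. */
uint32_t tdc_mmio_read(TdcState *s, uint32_t addr)
{
    switch (addr) {
    case REG_CTRL:
        return s->ctrl;
    case REG_STATUS:
        return s->count > 0 ? STATUS_READY : 0;
    case REG_DATA: {
        if (s->count == 0)
            return 0;                      /* empty FIFO reads as 0 */
        uint32_t v = s->fifo[s->head];
        s->head = (s->head + 1) % FIFO_DEPTH;
        s->count--;
        return v;
    }
    default:
        return 0;
    }
}

/* Called when the guest writes to the device's MMIO window. */
void tdc_mmio_write(TdcState *s, uint32_t addr, uint32_t val)
{
    if (addr == REG_CTRL)
        s->ctrl = val & CTRL_ENABLE;
    /* STATUS and DATA are read-only: writes are ignored. */
}

/* Model-side hook: a timestamp "arrives" on the input.
   Returns -1 if it is dropped (acquisition disabled or FIFO full). */
int tdc_push_timestamp(TdcState *s, uint32_t ts)
{
    if (!(s->ctrl & CTRL_ENABLE) || s->count == FIFO_DEPTH)
        return -1;
    s->fifo[(s->head + s->count) % FIFO_DEPTH] = ts;
    s->count++;
    return 0;
}
```

Nothing here tries to model how the real board measures time; the driver only ever observes register values, so that is all the model has to get right.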

Second, it’s not necessary to emulate the hardware completely: there’s no need to support every single feature, particularly if your software is not going to use it. The emulation can start with the basic functionality and then grow as needed.
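One way to let the emulation grow incrementally is to emulate only the registers the driver currently uses and make every other access visible instead of silently wrong. The sketch below assumes a hypothetical block of eight 32-bit registers, of which only two are implemented. In QEMU proper such accesses are usually reported with `qemu_log_mask(LOG_UNIMP, ...)`; here that is replaced by `fprintf` to keep the example self-contained, and all names are illustrative.

```c
#include <stdint.h>
#include <stdio.h>

/* Hypothetical device: eight 32-bit registers at offsets 0x00..0x1c,
   only some of which are emulated so far. */
#define NUM_REGS 8

typedef struct {
    uint32_t regs[NUM_REGS];
    unsigned unimp_hits;  /* how often the guest touched unemulated registers */
} StubDev;

/* Bitmap of implemented registers (0x00 and 0x04); grow it as needed. */
static const uint32_t implemented_mask = (1u << 0) | (1u << 1);

/* Map a byte offset to a register index, or -1 if misaligned/out of range. */
static int reg_index(uint32_t addr)
{
    if (addr % 4 != 0 || addr / 4 >= NUM_REGS)
        return -1;
    return (int)(addr / 4);
}

uint32_t stub_read(StubDev *d, uint32_t addr)
{
    int i = reg_index(addr);
    if (i < 0 || !(implemented_mask & (1u << i))) {
        d->unimp_hits++;
        fprintf(stderr, "unimplemented read at 0x%02x\n", (unsigned)addr);
        return 0;
    }
    return d->regs[i];
}

void stub_write(StubDev *d, uint32_t addr, uint32_t val)
{
    int i = reg_index(addr);
    if (i < 0 || !(implemented_mask & (1u << i))) {
        d->unimp_hits++;
        fprintf(stderr, "unimplemented write at 0x%02x = 0x%x\n",
                (unsigned)addr, (unsigned)val);
        return;
    }
    d->regs[i] = val;
}
```

When the driver starts using a new register, it is enough to add its bit to `implemented_mask` (plus whatever behaviour it needs), and the `unimp_hits` counter lets a test fail as soon as the driver touches something the model does not know about yet.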

The FMC TDC, for example, is an FMC card which is in our case connected to a PCIe bridge called SPEC (also available in the Open Hardware repository).

We need to emulate both cards in order to have a working system. For now, however, the emulation treats both as if they were a single device, which makes prototyping a bit easier and doesn’t really make a difference from the device driver’s point of view. Later the emulation can be split in two, as I did with the TPCI200 and the IP-Octal 232. This would allow us to support more FMC hardware without having to rewrite the bridging code.
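The planned split can be sketched as a small interface between the carrier model and the card plugged into it: the bridge handles its own registers and forwards everything else through a table of callbacks, so a different FMC card only has to provide its own `read`/`write` pair. The 4 KiB address split, the all-ones value returned for an empty slot and every name below (`MezzanineOps`, `carrier_read` and the rest) are assumptions for illustration, not the actual SPEC layout or QEMU’s API.

```c
#include <stddef.h>
#include <stdint.h>

/* Hypothetical interface between a carrier (bridge) model and the
   mezzanine card plugged into it. */
typedef struct {
    uint32_t (*read)(void *opaque, uint32_t offset);
    void (*write)(void *opaque, uint32_t offset, uint32_t val);
} MezzanineOps;

typedef struct {
    const MezzanineOps *ops;  /* NULL when the slot is empty */
    void *opaque;             /* the mezzanine's own state */
} MezzanineSlot;

/* Assumed address split: the bridge owns the low 4 KiB,
   everything above is forwarded to the card in the slot. */
#define BRIDGE_WINDOW 0x1000u

typedef struct {
    uint32_t bridge_ctrl;     /* single illustrative bridge register at 0x0 */
    MezzanineSlot slot;
} CarrierState;

uint32_t carrier_read(CarrierState *c, uint32_t addr)
{
    if (addr < BRIDGE_WINDOW)
        return addr == 0 ? c->bridge_ctrl : 0;
    if (c->slot.ops == NULL)
        return 0xffffffffu;   /* assumed: empty slot reads as all ones */
    return c->slot.ops->read(c->slot.opaque, addr - BRIDGE_WINDOW);
}

void carrier_write(CarrierState *c, uint32_t addr, uint32_t val)
{
    if (addr < BRIDGE_WINDOW) {
        if (addr == 0)
            c->bridge_ctrl = val;
        return;
    }
    if (c->slot.ops != NULL)  /* writes to an empty slot are dropped */
        c->slot.ops->write(c->slot.opaque, addr - BRIDGE_WINDOW, val);
}
```

With this shape, the bridging code stays the same whichever card model fills `slot`, which is the benefit the split is meant to bring.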

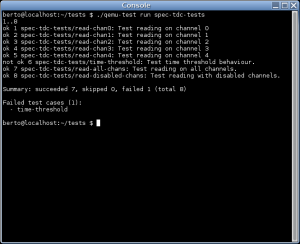

There’s also code in the emulation to force different kinds of scenarios, which we use to test whether the driver behaves as expected and handles errors correctly. These tests include simulating input on any of the lines, noise, DMA errors, etc.
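As an illustration of how such scenarios can be forced, the following sketch adds two hypothetical fault-injection knobs to a model: a one-shot DMA failure and periodic noise pulses. The structure and function names, the `DMA_ERROR` status value and the representation of guest memory as a plain byte array are all assumptions; the point is only that the test harness, not the guest, decides when things go wrong.

```c
#include <stdint.h>
#include <string.h>

/* Hypothetical fault-injection knobs set by the test harness. */
typedef struct {
    int fail_next_dma;     /* make the next DMA transfer report an error */
    unsigned noise_every;  /* inject a spurious pulse every N real ones (0 = off) */
} FaultConfig;

/* Values the model reports in a (hypothetical) DMA status register. */
#define DMA_OK    0u
#define DMA_ERROR 1u

typedef struct {
    FaultConfig faults;
    unsigned pulses_seen;
    uint32_t dma_status;
} TdcModel;

/* Copy 'len' bytes of acquired data into guest memory (here a plain buffer). */
int tdc_do_dma(TdcModel *m, uint8_t *guest_buf, const uint8_t *data, size_t len)
{
    if (m->faults.fail_next_dma) {
        m->faults.fail_next_dma = 0;  /* one-shot fault */
        m->dma_status = DMA_ERROR;    /* nothing is copied; the driver must notice */
        return -1;
    }
    memcpy(guest_buf, data, len);
    m->dma_status = DMA_OK;
    return 0;
}

/* Returns how many pulses the model records for one real input pulse:
   1 normally, 2 when a noise pulse is injected alongside it. */
unsigned tdc_input_pulse(TdcModel *m)
{
    m->pulses_seen++;
    if (m->faults.noise_every && m->pulses_seen % m->faults.noise_every == 0)
        return 2;
    return 1;
}
```

A test would set `fail_next_dma` or `noise_every`, let the driver run, and then check that it reported the error or coped with the spurious pulse: conditions that are hard or risky to reproduce on a real board.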


We have also written a set of test cases and set up a continuous integration system, so the driver is automatically tested every time the code is updated. For details, I again recommend reading Miguel’s post.
