- Introduction

- Installation

- Configuration of icecc scheduler

- Setup on icecc nodes

- Execution

- Icecream monitor

- Acknowledgments

Introduction

One of the big issues I have when working on Turnip driver development is that compiling either Mesa or VK-GL-CTS takes a long time, no matter how powerful the embedded board is. There are reasons for that: these boards typically have a limited amount of RAM (8 GB in the best case), slow storage (typically on-board UFS 2.1) and CPUs that are not as powerful as their x86_64 desktop counterparts.

Photo of the Qualcomm® Robotics RB3 Platform embedded board that I use for Turnip development.

The usual recommendation is to cross-compile. However, setting up a cross-compilation development environment can be cumbersome and error-prone, depending on the toolchain you use. One alternative is a distributed compilation system that supports cross-compilation, such as Icecream.

Icecream is a distributed compilation system that is very useful when you have to compile big projects and/or work on low-spec machines, while having powerful machines in the local network that can do the job instead. However, it is not perfect: the linking stage is still done on the machine that submits the job, which, depending on the available RAM, could be too much for it (you can alleviate this a bit by using ZRAM, for example).

One of the features that icecream has over its alternatives is that there is no need to install the same toolchain in all the machines as it is able to share the toolchain among all of them. This is very useful as we will see below in this post.

Installation

Debian-based systems

$ sudo apt install icecc

Fedora systems

$ sudo dnf install icecream

Compile it from sources

You can compile it from sources.

Configuration of icecc scheduler

You need to have an icecc scheduler in the local network that will balance the load among all the available nodes connected to it.

It does not matter which machine is the scheduler; you can use any of them, as it is quite lightweight. To run the scheduler, execute the following command:

$ sudo icecc-scheduler

Notice that the machine running this command is going to be the scheduler, but it will not participate in the compilation process unless you run the iceccd daemon on it as well (see next step).

Setup on icecc nodes

Launch daemon

First you need to run the iceccd daemon as root. This is not needed on Debian-based systems, as its systemd unit is enabled by default.

You can do that using systemd in the following way:

$ sudo systemctl start iceccd

Or you can enable the daemon at startup time:

$ sudo systemctl enable iceccd

The daemon will connect automatically to the scheduler that is running in the local network. If that’s not the case, or there is more than one scheduler, you can run it standalone and pass the scheduler’s IP as a parameter:

$ sudo iceccd -s <ip_scheduler>
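If a daemon does not seem to find the scheduler, it can help to check that the scheduler's TCP port is reachable from the node. The following is a minimal sketch, not part of icecream itself: `scheduler_reachable` is a hypothetical helper, it assumes bash and coreutils' `timeout` are available, and it assumes the scheduler listens on its default TCP port 8765 (adjust it if your setup differs).

```shell
# Hypothetical helper: succeeds if a TCP connection to the scheduler can be opened.
# Assumes the scheduler's default TCP port (8765) unless another port is given.
scheduler_reachable() {
    host="$1"
    port="${2:-8765}"
    # bash's /dev/tcp pseudo-device opens a TCP connection; timeout bounds the wait.
    timeout 3 bash -c 'exec 3<>"/dev/tcp/$0/$1"' "$host" "$port" 2>/dev/null
}

# Usage: replace <ip_scheduler> with the scheduler's address.
if scheduler_reachable "<ip_scheduler>"; then
    echo "scheduler port is reachable"
else
    echo "cannot reach the scheduler port; check the address and any firewall"
fi
```

A successful connection only shows that something is listening on that port; it does not prove the daemon has registered with the scheduler.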

Enable icecc compilation

With ccache

If you use ccache (recommended option), you just need to add the following in your .bashrc:

export CCACHE_PREFIX=icecc

Without ccache

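To use it without ccache, you need to add the directory with the icecc compiler wrappers to the $PATH environment variable so they are picked before the system compilers (the path below is the one used on Debian-based systems; other distributions may use a different directory):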

export PATH=/usr/lib/icecc/bin:$PATH
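A quick way to confirm that the wrappers are picked up is to check where the compiler name resolves. This is a minimal sketch: `uses_icecc_wrapper` is a hypothetical helper name, and the default directory is assumed to be the /usr/lib/icecc/bin path used above.

```shell
# Hypothetical helper: succeeds if the given compiler name resolves to a file
# inside the icecc wrapper directory (default assumed: /usr/lib/icecc/bin).
uses_icecc_wrapper() {
    compiler="$1"
    wrapper_dir="${2:-/usr/lib/icecc/bin}"
    # command -v prints the path that the shell would execute for this name.
    resolved="$(command -v "$compiler" 2>/dev/null)" || return 1
    case "$resolved" in
        "$wrapper_dir"/*) return 0 ;;
        *) return 1 ;;
    esac
}

if uses_icecc_wrapper gcc; then
    echo "gcc resolves to the icecc wrapper"
else
    echo "gcc does not resolve to the icecc wrapper; check your PATH"
fi
```

If the check fails after editing .bashrc, open a new shell (or source the file) so the updated PATH takes effect.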

Execution

Same architecture

If you followed the previous steps, any time you compile C/C++ code, icecc will distribute the work among the fastest nodes in the network. Notice that it takes into account system load, network connection and number of cores, among other variables, to decide which node will compile each object file.

Remember that the linking stage is always done on the machine that submits the job.

Different architectures (example cross-compiling for aarch64 on x86_64 nodes)

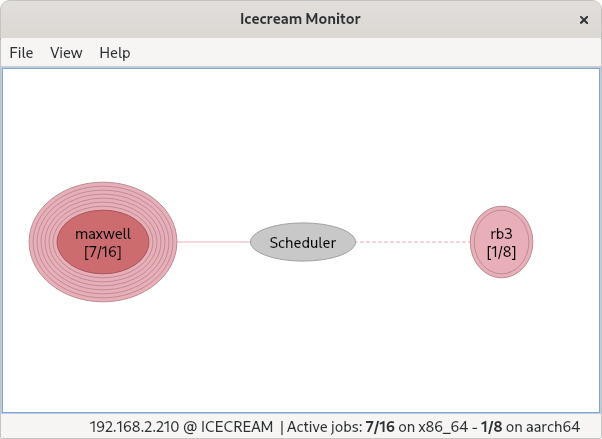

Icemon showing my x86_64 desktop (maxwell) cross-compiling a job for my aarch64 board (rb3).

Preparation on x86_64 machine

On one x86_64 machine, you need to create a toolchain. This is not done automatically by icecc, as you can have different toolchains for cross-compilation.

Install cross-compiler

For example, you can install the cross-compiler from the distribution repositories:

For Debian-based systems:

$ sudo apt install crossbuild-essential-arm64

For Fedora:

$ sudo dnf install gcc-aarch64-linux-gnu gcc-c++-aarch64-linux-gnu

Create toolchain for icecc

Finally, to create the toolchain to share in icecc:

$ icecc-create-env --gcc /usr/bin/aarch64-linux-gnu-gcc /usr/bin/aarch64-linux-gnu-g++

This will create a <hash>.tar.gz file. The <hash> is used to identify the toolchain to distribute among the nodes in case there is more than one. But don’t worry, once it is copied to a node, it won’t be copied again as it detects it is already present.
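Since the archive name is a hash, it is easy to lose track of which file was just generated. As a minimal sketch, the hypothetical helper `newest_tarball` below prints the most recently modified .tar.gz in a directory; it assumes you run it in the directory where icecc-create-env wrote the archive, and it relies on the `-nt` test operator that bash and dash support.

```shell
# Hypothetical helper: print the most recently modified .tar.gz in a directory.
newest_tarball() {
    dir="${1:-.}"
    newest=""
    for f in "$dir"/*.tar.gz; do
        # Skip the unexpanded pattern when no archive exists.
        [ -e "$f" ] || continue
        # -nt is true when the left file is newer than the right one.
        if [ -z "$newest" ] || [ "$f" -nt "$newest" ]; then
            newest="$f"
        fi
    done
    if [ -n "$newest" ]; then
        printf '%s\n' "$newest"
    fi
}

toolchain="$(newest_tarball .)"
echo "Toolchain archive: ${toolchain:-none found}"
```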

Note: it is important that the toolchain is compatible with the target machine. For example, if my aarch64 board is using Debian 11 Bullseye, it is better if the cross-compilation toolchain is created from a Debian Bullseye x86_64 machine (a VM also works), because you avoid incompatibilities like having different glibc versions.

If you have installed Debian 11 Bullseye on your aarch64 board, you can use my own cross-compilation toolchain for x86_64 and skip this step.

Copy the toolchain to the aarch64 machine

$ scp <hash>.tar.gz aarch64-machine-hostname:

Preparation on aarch64

Once the toolchain (<hash>.tar.gz) is copied to the aarch64 machine, you just need to add the following to your .bashrc:

# Icecc setup for crosscompilation

export CCACHE_PREFIX=icecc

export ICECC_VERSION=x86_64:~/<hash>.tar.gz
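If the archive is later moved or deleted, the exports above would point at a missing file. A slightly more defensive variant for .bashrc could look like the sketch below; `setup_icecc_cross` is a hypothetical function name, and `<hash>` is still a placeholder you need to replace with the real file name.

```shell
# Hypothetical helper: enable icecc cross-compilation only if the toolchain
# archive exists; otherwise leave the environment untouched.
setup_icecc_cross() {
    toolchain="$1"
    if [ -f "$toolchain" ]; then
        export CCACHE_PREFIX=icecc
        # The x86_64: prefix marks this archive as the toolchain for x86_64 nodes.
        export ICECC_VERSION="x86_64:$toolchain"
    else
        echo "icecc toolchain not found at $toolchain; icecc setup skipped" >&2
        return 1
    fi
}

# Replace <hash> with the name of the archive you copied to this machine.
setup_icecc_cross "$HOME/<hash>.tar.gz" || true
```

Using $HOME instead of ~ avoids depending on tilde expansion inside the quoted value.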

Execute

Just compile on the aarch64 machine and the jobs will be distributed among your x86_64 machines as well. Take into account that the jobs will also be shared with other aarch64 machines if icecc decides so; no extra step is needed for that.

It is worth noting that the cross-compilation toolchain only needs to be created once, as icecream will copy it to all the x86_64 machines that execute any job launched by this aarch64 machine. However, you need to copy this toolchain to every aarch64 machine that will use icecream resources for cross-compiling.

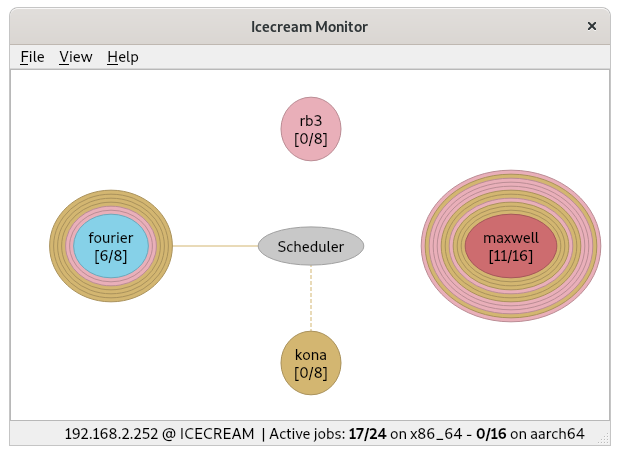

Icecream monitor

This is an interesting graphical tool to see the status of the icecc nodes and the jobs under execution.

Install on Debian-based systems

$ sudo apt install icecc-monitor

Install on Fedora

$ sudo dnf install icemon

Install it from sources

You can compile it from sources.

Acknowledgments

Even though icecream has good cross-compilation documentation, it was the post written 8 years ago by my Igalia colleague Víctor Jáquez that convinced me to set up icecream as explained in this post.

Hope you find this info as useful as I did :-)