Why don't we do a demo? Part 1: the plan

Introduction

Some time ago, I found myself with some extra time on my hands and I started experimenting with Zephyr as a way to reconnect with my professional past and also to see what embedded software looks like nowadays.

Initially, I had no further intentions beyond playing around a bit, gaining enough know-how to undertake typical embedded software projects and making the occasional upstream contribution here and there. Then a colleague asked me: "Now that you've spent some time with Zephyr, what do you think about doing a demo about it?". Not a bad idea. The goal would be to have something to show at conferences that showcases Zephyr's possibilities with a simple application.

At work, I'm not a specialist. Most of the time I do basically one thing, and it typically doesn't fit into a specific field, area, or team: I solve problems 1. So this is an example of how to solve a single-sentence problem ("Let's do a demo") by whatever means necessary, involving software, hardware, planning, design, logistics, decision-making and improvisation. It's also a personal expression of the importance, meaning and value of human work.

The following is a non-exhaustive list of the problems faced along the way and the solutions found.

Problem 1: the idea

The starting point is just a phrase, "Why don't we do a demo?", and a deadline. Nothing more. The sheer number of possibilities can be an obstacle in itself if we can't find a way to narrow down the solution space. Obviously, we'll run into limitations and constraints down the road that will shape the final solution but, right now, everything is uncertain.

With the demo, we want to show the possibilities Zephyr offers for embedded development using 100% open source software, how complex applications can be developed with Zephyr, and a variety of development cases within the same application.

Solution

There are many approaches to a technical demo. However, having been to conferences with demo booths, it's clear to me that live, interactive demos attract by far the most attention from the general public. Regardless of the technical merits on display, people are drawn to things they can touch: blinking lights, sounds, video games.

So a hard requirement from the beginning was that the demo should be interactive. Fortunately, the technology behind it lends itself easily to that, although I've seen many Zephyr-based demos that were rather static and meant only for display. The intention here is to let the public actually use it.

Another important thing to take into account is that widespread or trending technologies and buzzwords are more attractive than obscure or niche terms. Fortunately, I'm building the demo from scratch, so I get to decide what to show. In this case, I picked:

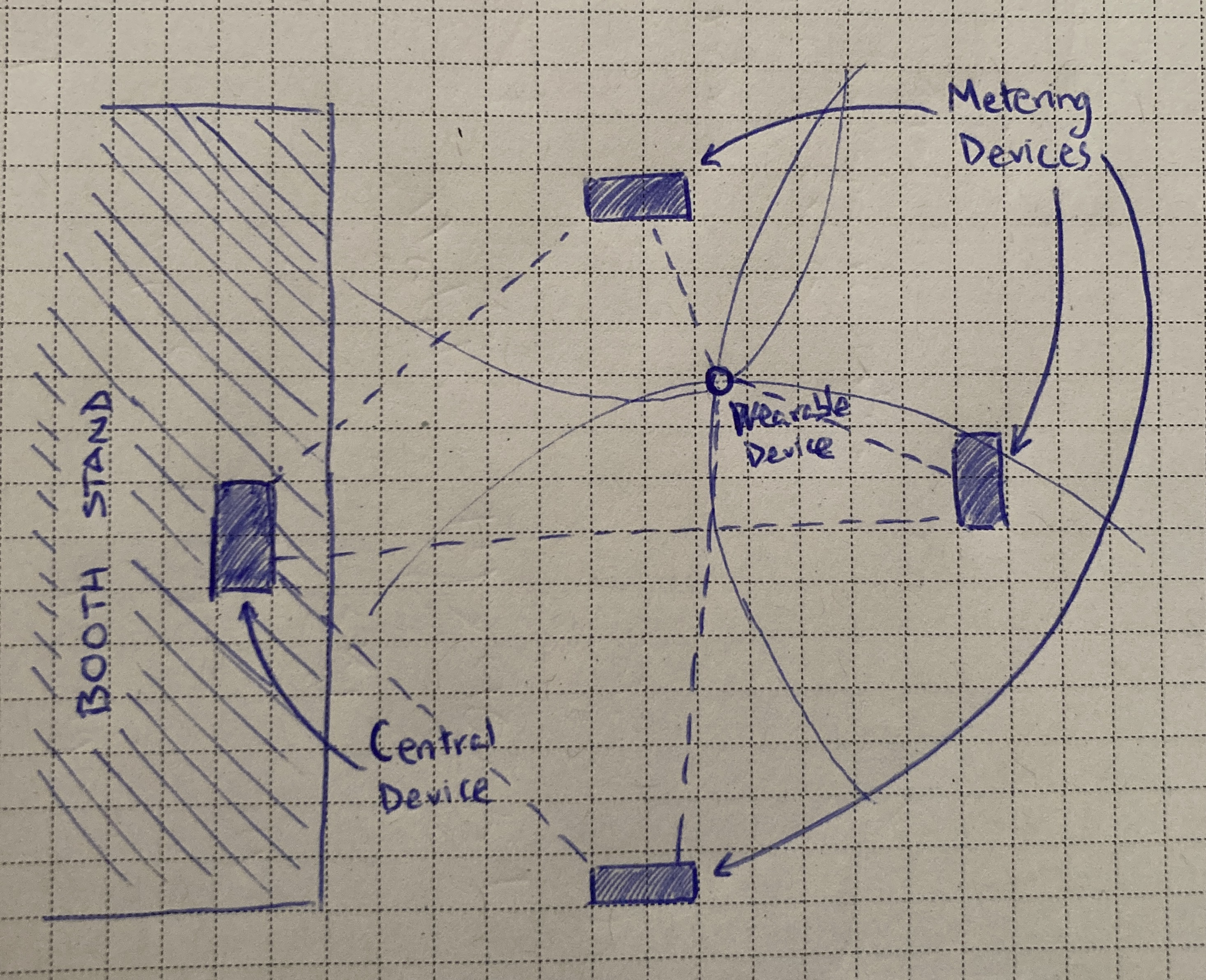

The goal, then, is to develop a hardware/software solution using Zephyr and its BLE stack that the public can interact with and that displays real-time information about that interaction. The initial idea is to have small battery-powered devices in the demo booth, track their positions by trilateration using whatever distance-measurement mechanism BLE devices provide, and have a central device display the positions of the devices in real time.

Problem 2: selecting the hardware

Now that I've settled on an initial idea, even if it's still in a very rough, sketchy form with no technical details, I can start experimenting with the options. The first step is to research the hardware and software possibilities for reaching our goal, pick up some evaluation boards and start sketching ideas, to get a better understanding of how feasible the idea is and which limitations I might run into (time, software/hardware constraints, skills, etc.).

Solution

A good option for BLE-based applications is one of Nordic's development kits. They're easy to source and inexpensive. Besides, the recent nRF54L15 SoC supports Bluetooth Channel Sounding, which promises precise distance estimation between devices. Just what I'm looking for.

I'll need two types of devices for this: one of them needs to be small (wearable size, if possible) and battery-powered. The other type will be at a fixed location and can have a cabled power supply. The idea is to have three devices at fixed locations in the booth measuring the distance to a number of battery-powered devices that will be moving. Then, a central device will collect the distance information from the metering devices and use it to calculate the position of each battery-powered device.

This central device will need some way to display the positions of the devices in a graphical interface, so I need a device that can connect to the metering devices, is well supported in Zephyr, and can drive a display out of the box.

With all these requirements in mind I came up with this list of devices:

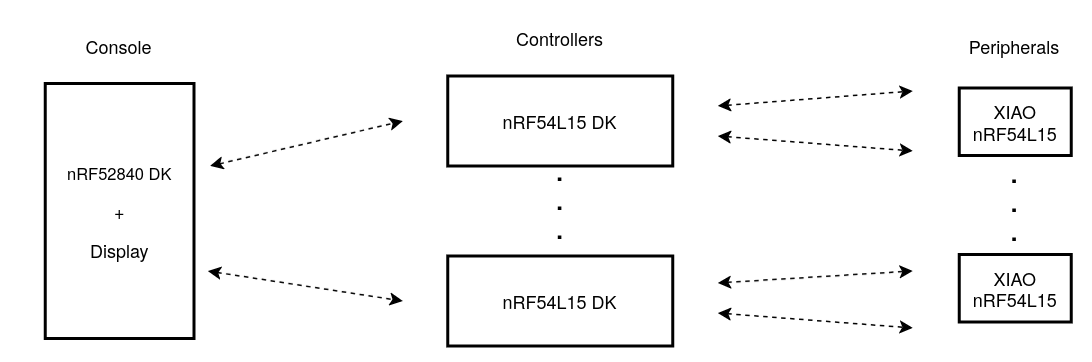

- For the battery-powered devices: Seeed Studio XIAO nRF54L15, based on the nRF54L15 SoC.

- For the metering devices: nRF54L15 DK boards from Nordic, also based on the nRF54L15.

- For the central device: nRF52840 DK board, which supports an SPI touchscreen like this one.

All the hardware is already supported in Zephyr, so that should eliminate a lot of the initial friction and save us time.

Problem 3: practical limitations, redefining the idea

After some initial experiments with the hardware, running sample BLE applications and getting familiar with the ecosystem, I found out that, while the nRF54L15 hardware supports Bluetooth Channel Sounding, the Zephyr BLE stack doesn't support it yet. To use it, I'd have to switch to Nordic's SoftDevice controller instead of the upstream Zephyr controller, together with the nRF Connect BLE stack.

This is a problem because a key feature of this demo should be that it's done using 100% open source code and, preferably, upstream Zephyr code.

Another, even bigger obstacle is that it's not clear whether collecting distance data from multiple sources simultaneously for trilateration, and doing it for multiple peripherals at the same time, is viable in practice. I couldn't find any examples or documentation about it, so I could be entering uncharted territory. Considering that we have a deadline, I'd rather find an alternative.

Solution

The immediate solution is to find a less audacious idea to develop using the same hardware that I already have, keeping it interactive but simpler, and keeping the same goals.

The idea I finally settled on is an extension of the typical BLE peripheral-central application, where the peripheral publishes some services and the central device connects to it and issues GATT reads and writes to the peripheral's characteristics. On top of that, it adds a multi-level network topology instead of a simple star network, plus real-time remote display and control of the devices through a graphical interface. So we'd have three device types: the battery-powered peripherals, which provide the basic services; the controller devices, which connect to the peripherals to control them remotely; and a console device, which connects to the controllers and can display and control the devices remotely through a graphical interface.

Zephyr already supports Bluetooth Mesh, but using it would take away part of the challenge of implementing the network routing ourselves, so I'm keeping things more interesting by implementing a custom tree topology that gives us finer-grained control and can be tailored to a specific application use case.

This means that the controller device will need to act as both a BLE central and a peripheral simultaneously, while the peripheral devices will act only as peripherals and the console only as a central.

Problem 4: initial planning

With the development boards at hand, I can start designing and developing the firmware for the three board types, including testing and documentation. The only other certainty I have right now is a deadline: the conference where we want to show the demo. Now I need to draw up a rough plan with concrete dates.

Solution

Considering that I'll surely run into a few bad surprises down the road, and that there'll be uncertainty and problems I can't anticipate yet, since this is the first time we're doing a demo with these characteristics, I set myself a personal hard deadline one month before the real one. Ideally, the firmware should be done and thoroughly tested one month before that, which would leave two full months for additional preparations and for sorting out whatever last-minute obstacles come up.

Of course, all of this rough planning is based purely on intuition. I could fall into the trap of trying to plan everything beforehand and writing a well-specified roadmap of everything that needs to be done in minute detail, but I'd be setting myself up for failure from the start, since 90% of the work ahead is a big question mark. I'm defining everything as I go, and in cases like this it's much more reasonable to plan and work based on different principles:

- Define reasonable and achievable milestones and iterate based on them.

- Iterate fast and as many times as needed.

- Re-draw the plan after an iteration if needed.

- Be ready to improvise.

- Improve incrementally and have faith in the process. Don't look at the top of the mountain; you know where it is. Focus on the next meter of path in front of you.

Doing this as a one-person army has both pros and cons. You have to shoulder fear and uncertainty on your own, but you're also free to make whatever decisions you need, whenever you need to.

So now we're ready to start developing. A rough sketch of the firmware development milestones could be:

- Base application for the peripheral: board setup and hardware handling.

- Base application for the controller device: board setup and hardware handling.

- Basic peripheral-central BLE application using the peripheral and controller devices.

- Base application for the console device: board setup and hardware handling.

- Make the controller device work as both a BLE peripheral and central device.

- Incorporate the console device into the peripheral + controller application.

- Graphical interface design and implementation.

Testing and simulation should be a part of every milestone.

In the next post we'll go through the firmware development part of the project.

1: I like to think that's a specialty, though. Maybe one day that'll be a role in the company.